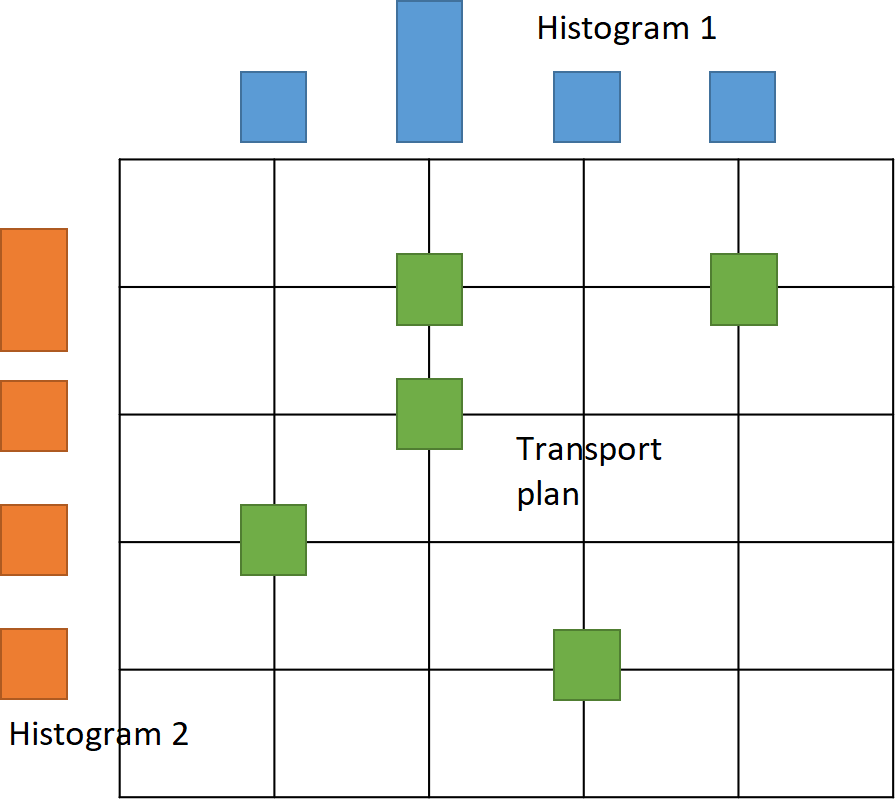

Optimal transport, also known as the transportation problem, is a mathematical framework for measuring the dissimilarity between two probability distributions. Its modern formulation originated in operations research in the mid-20th century, and it has since found applications in economics, computer vision, machine learning, image processing, quantum chemistry, diffusion processes, and many other fields. It has also been very influential in connecting partial differential equations, geometry, and probability.

In the context of probability distributions, optimal transport aims to find the most cost-effective way to transform one distribution into another. Each distribution is regarded as a collection of mass, and the transportation cost is determined by a distance or cost function between the locations of this mass. The optimal transport problem seeks a transport plan, i.e., a probability measure on the product space whose marginals are the two given distributions, that minimizes this cost, effectively "moving" the mass from one distribution to the other as efficiently as possible. One of the most common distances used in optimal transport is the Kantorovich-Wasserstein distance (also known as the Earth Mover's Distance), where the cost function is given by the Euclidean distance.
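
In symbols, the problem can be sketched as follows. The notation is introduced here for illustration and does not appear in the original text. For probability measures $\mu$ and $\nu$ on $\mathbb{R}^d$, let $\Pi(\mu,\nu)$ denote the set of transport plans, i.e., probability measures on $\mathbb{R}^d \times \mathbb{R}^d$ whose marginals are $\mu$ and $\nu$. For a cost function $c(x,y)$, the optimal transport cost is

$$
\mathrm{OT}_c(\mu,\nu) \;=\; \inf_{\pi \in \Pi(\mu,\nu)} \int_{\mathbb{R}^d \times \mathbb{R}^d} c(x,y)\, \mathrm{d}\pi(x,y).
$$

Taking $c(x,y) = \|x-y\|^p$ with $p \ge 1$, and assuming both measures have finite $p$-th moments, gives the $p$-Wasserstein distance

$$
W_p(\mu,\nu) \;=\; \Big( \inf_{\pi \in \Pi(\mu,\nu)} \int_{\mathbb{R}^d \times \mathbb{R}^d} \|x-y\|^p \, \mathrm{d}\pi(x,y) \Big)^{1/p}.
$$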

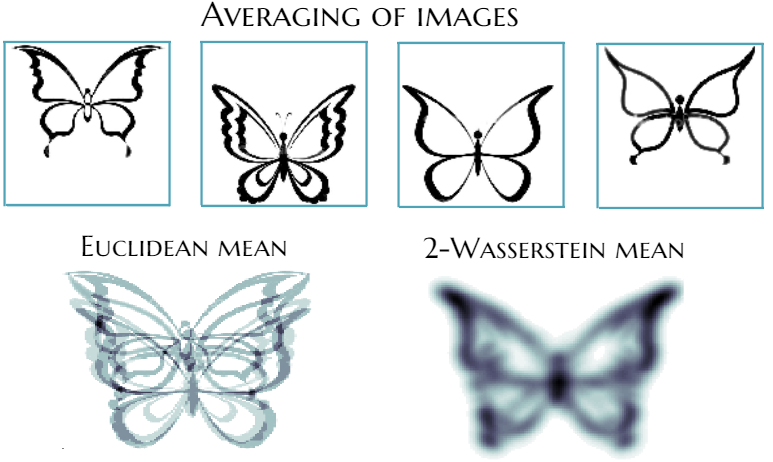

Consider grayscale images in which each pixel carries a mass. Normalizing the pixel intensities turns each image into a discrete probability measure on the pixel grid. The transportation cost between two pixels is the squared Euclidean distance between them. The goal is to transport one image to the other using a transportation plan, represented by a matrix whose entries specify how much mass moves between each pair of pixels. Minimizing the total transportation cost over all admissible plans gives the Kantorovich-Wasserstein distance (with this cost, the minimum is the squared 2-Wasserstein distance), a form of optimal transport distance. This distance applies to many objects beyond images, but it is computationally intensive. In return, it captures the geometry of objects better than simple distances such as the Euclidean one. The Wasserstein barycenter defines the mean probability measure of a set of measures by minimizing the sum of squared Kantorovich-Wasserstein distances to all measures in the set. Besides images, probability measures or histograms can model other real-world objects such as videos, texts, genetic sequences, and protein backbones.

Histograms and transportation plan (left). Euclidean and 2-Wasserstein barycenter of images (right).
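
The discrete problem described above (normalized pixel intensities, squared Euclidean cost between pixels, a plan given by a matrix) is a linear program. The following is a minimal sketch, assuming SciPy's `linprog` as a general-purpose solver. The helper names `pixel_cost` and `discrete_ot` are hypothetical and chosen for illustration only. A generic LP solver is practical only for very small grids, and this is not presented as the method used for the figures.

```python
import numpy as np
from scipy.optimize import linprog


def pixel_cost(height, width):
    """Squared Euclidean distance between all pairs of pixel positions."""
    ys, xs = np.mgrid[0:height, 0:width]
    pts = np.stack([ys.ravel(), xs.ravel()], axis=1).astype(float)
    diff = pts[:, None, :] - pts[None, :, :]
    return (diff ** 2).sum(axis=2)


def discrete_ot(a, b, cost):
    """Minimize <plan, cost> over plans with row sums a and column sums b."""
    n, m = cost.shape
    # The plan is flattened row by row, so entry (i, j) sits at index i * m + j.
    row_sums = np.kron(np.eye(n), np.ones((1, m)))  # sum_j plan[i, j] = a[i]
    col_sums = np.kron(np.ones((1, n)), np.eye(m))  # sum_i plan[i, j] = b[j]
    result = linprog(
        cost.ravel(),
        A_eq=np.vstack([row_sums, col_sums]),
        b_eq=np.concatenate([a, b]),
        bounds=(0, None),
        method="highs",
    )
    return result.fun, result.x.reshape(n, m)


# Two toy 2x2 "images": all mass in the top-left vs. the bottom-right pixel.
img_a = np.array([[1.0, 0.0], [0.0, 0.0]])
img_b = np.array([[0.0, 0.0], [0.0, 1.0]])

# Normalize intensities so each image becomes a discrete probability measure.
a = img_a.ravel() / img_a.sum()
b = img_b.ravel() / img_b.sum()

cost_value, plan = discrete_ot(a, b, pixel_cost(2, 2))
w2 = np.sqrt(cost_value)  # 2-Wasserstein distance is the root of the minimal cost
print(cost_value, w2)
```

In this toy case all mass moves diagonally by one pixel in each direction, so the minimal squared-distance cost is 2 and the 2-Wasserstein distance is its square root.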

Statistical Optimal Transport

Modern data sets are often too complex to be described properly by linear models. Without linearity, standard inference techniques cannot be applied directly, and a new toolbox is needed that takes into account the properties of the underlying space. At WIAS, an approach based on optimal transportation theory was developed as a convenient instrument for the analysis of complex data sets. This includes establishing the applicability of the classical resampling technique known as the multiplier bootstrap in the 2-Wasserstein space. Moreover, the convergence and concentration properties of empirical barycenters are studied, and the setting is extended to the Bures-Wasserstein distance. The theoretical results obtained are used to introduce Gaussian processes indexed by multidimensional distributions: positive definite kernels between multivariate distributions are constructed via a Hilbert space embedding that relies on optimal transport maps.

Computational Optimal Transport

Calculating the Wasserstein barycenter of m measures is a computationally hard optimization problem that involves the repeated computation of m Kantorovich-Wasserstein distances. Moreover, in the large-scale setup, storing and processing the transportation plans required to calculate these distances can be intractable on a single computer. On the other hand, recent studies on distributed learning and optimization algorithms have demonstrated their efficiency for statistical and optimization problems over arbitrary networks of computers or other devices with inherently distributed data, for example when the data is produced by a distributed network of sensors, or when communication or privacy constraints allow only a limited amount of information to be shared across the network.
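
To give a feel for the object being computed, here is a minimal sketch of one special case. For one-dimensional measures given by the same number of equally weighted samples, the 2-Wasserstein barycenter has a closed form: it is obtained by averaging the sorted samples (quantile averaging). This easy case is an illustration only. It does not reflect the difficulty of the general problem and is not the decentralized algorithm of Dvurechensky et al. The function name `barycenter_1d` is hypothetical.

```python
import numpy as np


def barycenter_1d(samples, weights=None):
    """2-Wasserstein barycenter of 1D empirical measures.

    `samples` has shape (m, n): m measures, each given by n equally weighted
    points. Returns the n support points of the barycenter.
    """
    sorted_samples = np.sort(np.asarray(samples, dtype=float), axis=1)
    m = sorted_samples.shape[0]
    if weights is None:
        weights = np.full(m, 1.0 / m)  # equal weight for every measure
    # Sorted samples act as empirical quantiles; average them across measures.
    return np.asarray(weights, dtype=float) @ sorted_samples


measures = np.array([
    [0.0, 1.0, 2.0],
    [10.0, 12.0, 11.0],
])
bary = barycenter_1d(measures)
print(bary)
```

For the two toy measures above, the barycenter sits halfway between them and keeps their shape, with support points 5, 6, and 7.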

Evolution of the local approximation of the 2-Wasserstein barycenter on each node in the network for von Mises distributions (left), images from the MNIST dataset (middle), and brain images from the IXI dataset (right). As the iteration counter increases, the local approximations converge to the same distribution, which is an approximation of the barycenter. Simulations made by César A. Uribe for the paper P. Dvurechensky, D. Dvinskikh, A. Gasnikov, C. A. Uribe, and A. Nedic. Decentralize and randomize: Faster algorithm for Wasserstein barycenters. In S. Bengio, H. Wallach, H. Larochelle, K. Grauman, N. Cesa-Bianchi, and R. Garnett, editors, Advances in Neural Information Processing Systems 31, NIPS'18, pages 10783-10793. Curran Associates, Inc., 2018. arXiv:1802.04367.